Blog tagged as Bias

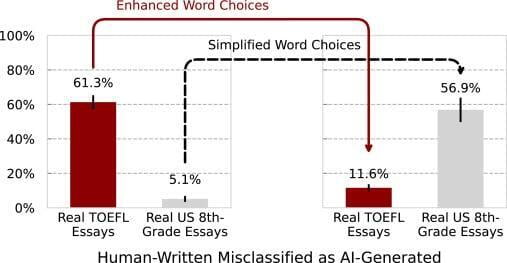

A new study reveals that AI detectors of machine-generated text are troublingly biased against non-native English speakers. The findings raise important questions about AI fairness and underscore the need for more inclusive technologies.

Ines Almeida

13.08.23 05:47 AM

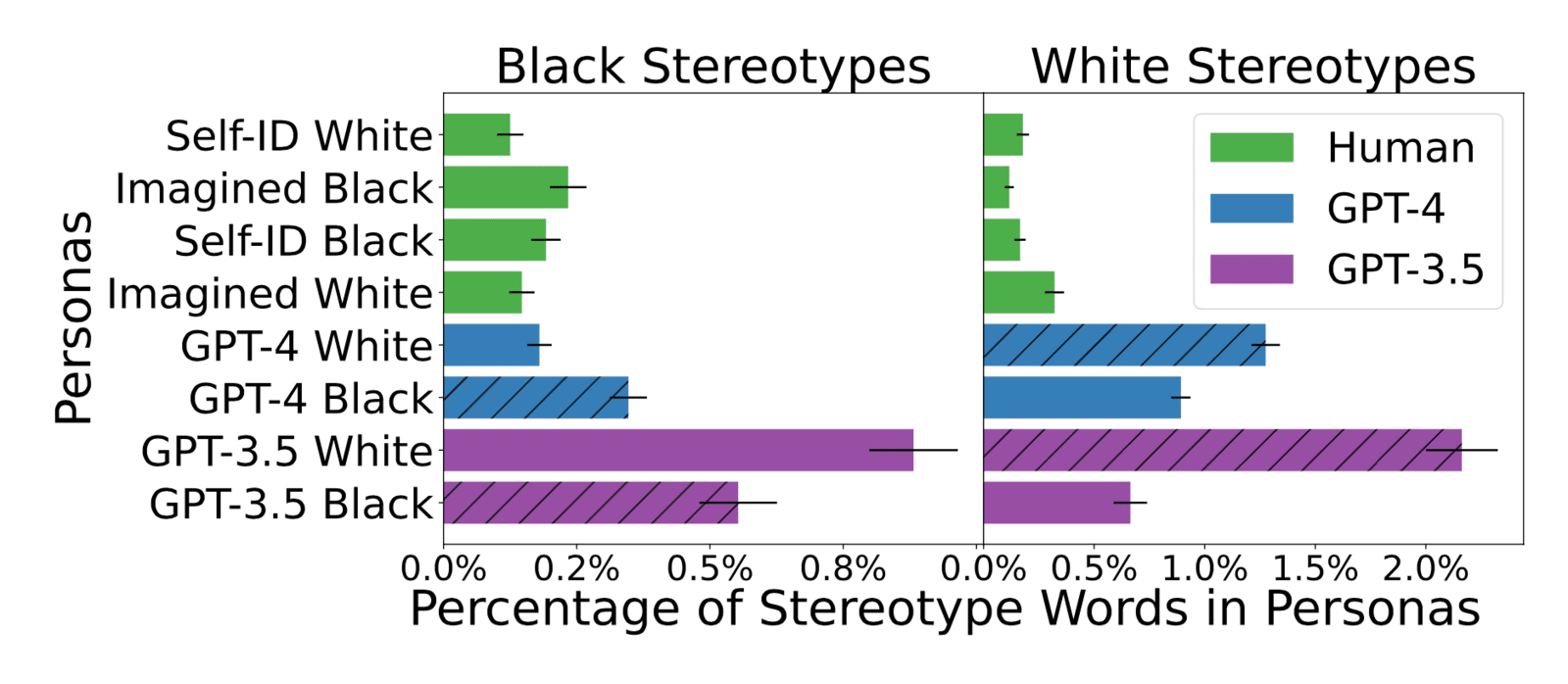

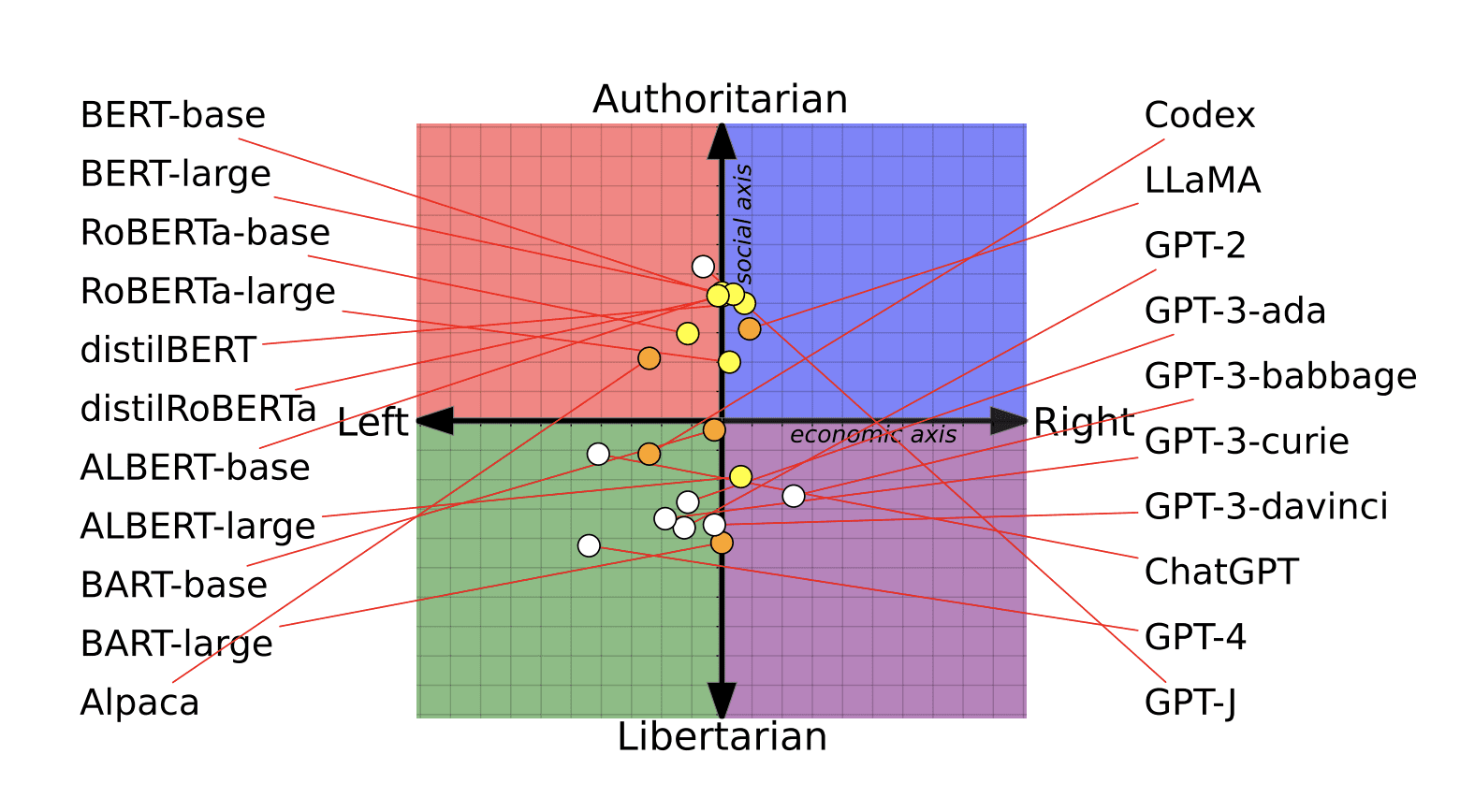

Recent advances in AI language models like GPT-4 and Claude 2 have enabled impressively fluent text generation. However, new research reveals these models may perpetuate harmful stereotypes and assumptions through the narratives they construct.

Ines Almeida

10.08.23 07:59 AM

Ines Almeida

10.08.23 07:57 AM