Blog tagged as Explainability

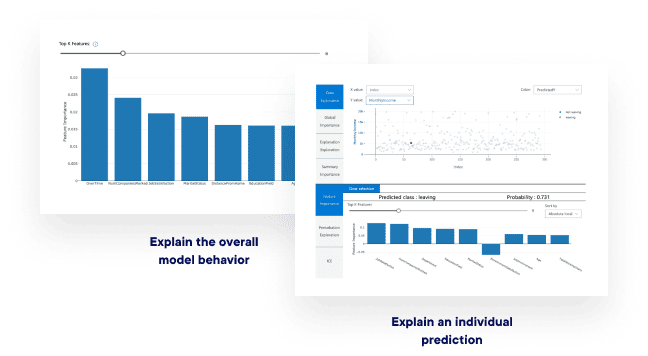

InterpretML is a valuable tool for unlocking the power of interpretable AI in traditional machine learning models. While it may have limitations when it comes to directly interpreting LLMs, the principles of interpretability and transparency remain crucial in the age of generative AI.

Ines Almeida

04.04.24 12:45 PM

A new study has shown that transformers can be expressed in a simple logic formalism. This finding challenges the perception that transformers are inscrutable black boxes and suggests avenues for interpreting how they work.

Ines Almeida

13.08.23 07:50 PM

Ines Almeida

12.08.23 09:22 AM

Backpack models have an internal structure that is more interpretable and controllable than that of existing models such as BERT and GPT-3.

Ines Almeida

10.08.23 07:59 AM

Ines Almeida

10.08.23 07:57 AM

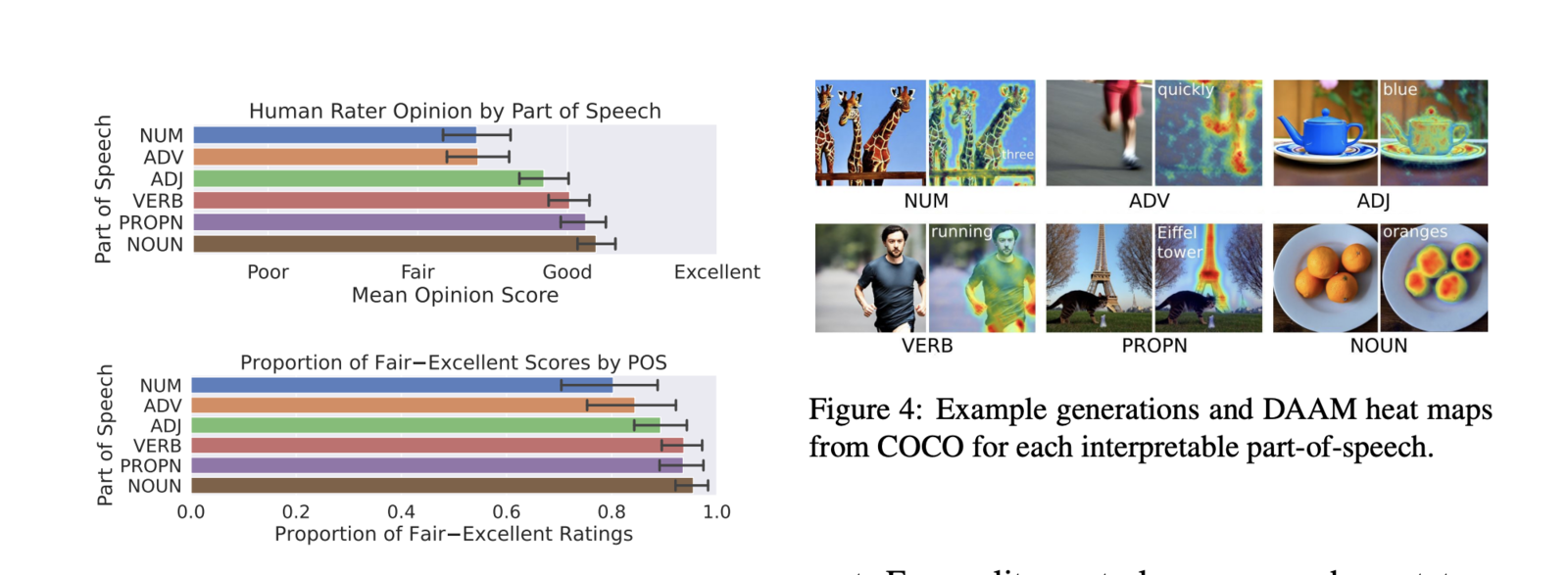

In a paper titled "What the DAAM: Interpreting Stable Diffusion Using Cross Attention", researchers propose a method called DAAM (Diffusion Attentive Attribution Maps) to analyze how words in a prompt influence different parts of the generated image.

Ines Almeida

08.08.23 04:59 PM